Solving Hallucination: Where the Research Stands

A GenAI Newsletter by Raj

Hallucination is the single biggest barrier to deploying LLMs in production. Everyone working with these models knows this. What's less well understood is how much the research has matured. Three years ago, hallucination was treated as a mysterious failure mode. Today, researchers have identified the internal mechanisms that cause it, built benchmarks that measure it across specific domains, and developed mitigation techniques that reduce it from 50%+ to low single digits in controlled settings.

The problem is not solved. Models still hallucinate at rates that make them dangerous in legal, medical, and financial applications. The agentic setting makes everything worse. But the field has moved from "why does this happen" to "here is the engineering stack that manages it," and the trajectory of the research is worth mapping.

What We Know About Why Models Hallucinate

The vague explanation ("next-token prediction sometimes produces plausible but wrong tokens") has been replaced by specific mechanistic findings.

Two internal failure modes

Research across multiple language models has identified two distinct mechanisms at the neuron level (Comprehensive Survey, arXiv 2510.06265):

Knowledge enrichment failures in lower-layer MLPs. The lower layers of a transformer are responsible for retrieving factual knowledge associated with the subject of a query. When these layers have insufficient or contradictory information (because the training data was sparse or conflicting), the model generates a plausible-sounding fabrication. The model has no internal signal that it's making something up. The information simply isn't there.

Answer extraction failures in upper-layer attention heads. Even when lower layers successfully retrieve correct knowledge, upper-layer attention heads sometimes fail to select the right fact from what's available. The knowledge exists in the model's internal state, but the selection mechanism picks the wrong piece. This is closer to a lookup bug than a knowledge gap, and it explains why models sometimes hallucinate on topics they demonstrably "know."

The RAG override problem

The ReDeEP paper (presented at ICLR 2026) identified a third mechanism specific to retrieval-augmented generation: Knowledge FFNs overpower Copying Heads. When the model has both parametric knowledge (from training) and retrieved knowledge (from documents), the feedforward networks encoding parametric knowledge can dominate the residual stream, causing the model to ignore the retrieved content. This explains a frustrating production failure: RAG systems hallucinating on exactly the questions the retrieved documents were supposed to answer, because the model's memory overrides the evidence in front of it.

The confidence problem

MIT researchers found (January 2025) that models are 34% more likely to use phrases like "definitely," "certainly," and "without doubt" when generating incorrect information. OpenAI's September 2025 paper showed that standard training objectives and leaderboard metrics actively reward this behavior: models learn to bluff because bluffing scores better on benchmarks than saying "I don't know."

This means the most dangerous hallucinations are the ones that sound most confident. Current evaluation methods are biased toward rewarding exactly the wrong behavior.

How Bad Is It? Domain by Domain

The Vectara Hallucination Leaderboard (37+ models, 7,700+ articles) reports aggregate hallucination rates of 15-52% across models, with most clustering in the 20-27% range. But aggregates mask the real story. Hallucination severity varies enormously by domain, and the domains where accuracy matters most are the ones where models perform worst.

Legal is the most dangerous case studied. Stanford RegLab and HAI tested LLMs on specific legal queries and found hallucination rates of 69-88%. On questions about a court's core ruling, models hallucinate at least 75% of the time. Purpose-built legal AI tools don't fully solve this: Lexis+ AI produced incorrect information 17% of the time, Westlaw AI-Assisted Research 34%. The failure mode that gets the most attention is fabricated citations (case names, docket numbers, and holdings that don't exist), but the deeper problem is subtle misstatement of legal holdings where the case exists but the model mischaracterizes what it decided.

Medical hallucination is measured most rigorously by the MedHallu benchmark (10,000 QA pairs from PubMedQA). The best model achieves F1 of only 0.625 on detecting hard-category hallucinations. In production, healthcare AI systems show 10-20% hallucination rates depending on task type (Suprmind Research Report). Drug interaction queries and treatment protocol recommendations sit at the higher end (15-20%). Diagnostic queries are closer to 10%, partly because diagnosis is more constrained by the presented symptoms.

Financial applications run 15-25% hallucination rates without mitigation, dropping to 3-8% with production RAG systems (Suprmind). The PHANTOM benchmark specifically tests hallucination in long financial documents like SEC filings, where short-context benchmarks don't predict actual performance. A finding that should concern anyone building financial AI: four out of six leading models fabricate financial data when source documents are incomplete, and two of those do so confidently, without disclosure, in a format that looks authoritative.

Code generation hallucinates differently. Models hallucinate 12.1% of function names in standard benchmarks. On adversarial prompts using fake library names, hallucination rates reach 99%. The practical impact: code that compiles but calls nonexistent APIs or imports phantom packages.

The best case is grounded summarization (restating a provided document faithfully), where top models achieve 0.7-1.5% hallucination rates. The 100x gap between this and the legal domain's 69-88% tells you how much the task constrains the problem.

The Agentic Hallucination Problem

All of the above applies to a model generating text that a human reads. In agentic systems, the model generates text and then acts on it. The error becomes an action before anyone reviews it.

The first comprehensive survey of agent hallucinations (arXiv 2509.18970) identified 18 triggering causes and proposed a taxonomy for agent-specific failures. The ICLR 2026 workshop "Agentic AI in the Wild" (April 27, Singapore) is devoted to this topic. Three failure modes specific to agents have been defined:

Cascading hallucination. An agent hallucinates one fact early in a multi-step workflow. Each subsequent step builds on the error. The OWASP ASI08 guide on cascading failures documents a concrete case: an inventory agent invents a nonexistent SKU, then calls four downstream APIs to price, stock, and ship the phantom item. Every API call succeeds (HTTP 200). Traditional monitoring sees nothing wrong. The workflow is semantically broken but technically healthy.

Silent hallucination. A paper submitted to the ICLR 2026 workshop identifies a failure mode where the hallucinated belief never appears in the agent's output. The agent generates an internal false assumption that shapes its subsequent tool calls and interpretations without being stated as text. Because the belief is never surfaced, output-level detection methods can't catch it. This class of failure requires monitoring internal representations, which is an active research problem.

Trajectory divergence. Documented in "Beyond Fluency: Toward Reliable Trajectories in Agentic IR", this occurs when the agent's stated reasoning and its actual tool calls drift apart. The chain-of-thought says one thing. The tool call does another. The reasoning looks coherent. The action looks valid. The mapping between them is broken, and linguistic fluency masks the misalignment.

The AgentHallu benchmark (693 agent trajectories, 7 frameworks, 5 domains) is the first systematic measurement framework for these failures. Its key contribution is hallucination attribution: identifying not just that a hallucination occurred, but which specific step in the agent's trajectory caused it, across 5 categories (Planning, Retrieval, Reasoning, Human-Interaction, Tool-Use) and 14 sub-categories.

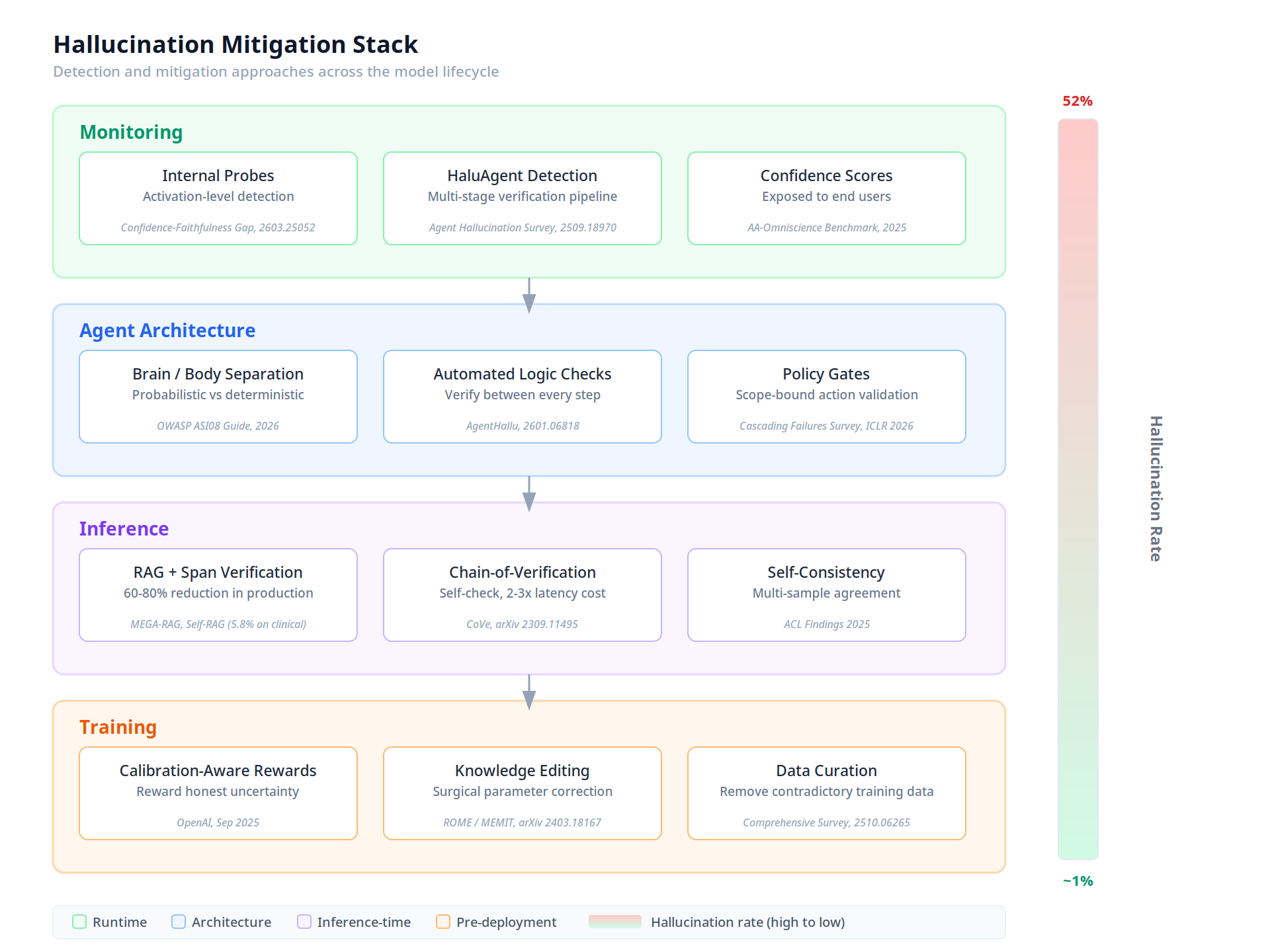

The Mitigation Stack

The diagram below shows how current detection and mitigation techniques layer across the model lifecycle, with citations for each approach:

Training-time

Calibration-aware rewards (OpenAI, September 2025) change the training signal to value honest uncertainty over confident bluffing. The AA-Omniscience benchmark (6,000 questions, 42 topics) was designed specifically to penalize wrong answers more harshly than admitting "I don't know."

Knowledge editing using ROME and MEMIT locates specific model parameters storing a particular fact and surgically corrects them without full retraining. Effective for known factual errors. Does not address the broader problem of generating plausible-sounding content on topics with sparse training coverage.

Inference-time

RAG with span-level verification is the highest-impact intervention available in production today. Plain RAG reduces hallucination by 60-80%. Self-reflective RAG (generate, identify unsupported claims, revise using only cited passages) achieved 5.8% hallucination on 250 clinical vignettes. The MEGA-RAG framework extends this for public health with multi-source retrieval and dynamic knowledge editing.

Chain-of-Verification (CoVe) (arXiv 2309.11495). The model drafts a response, generates verification questions about its own claims, answers those questions independently (so answers aren't biased by the draft), and revises. The independence of the verification step is what makes it effective. Adds 2-3x latency.

Agent architecture

Brain/body separation (OWASP ASI08). Probabilistic reasoning (LLM) is strictly separated from deterministic execution (tool calls). Hallucinations in reasoning can't directly become actions without passing through a verification layer.

Automated logic checks between steps are the primary defense against cascading hallucination. The AgentHallu work shows that catching errors at step 2 prevents propagation to steps 3, 4, and 5.

Runtime monitoring

Internal probes. The "Closing the Confidence-Faithfulness Gap" paper (arXiv 2603.25052) found that calibration and confidence are encoded as separate, orthogonal directions in the model's residual stream. Linear probes trained on internal activations can detect hallucination without external knowledge, because the model's internal state carries a different signal for faithful vs. hallucinated output even when the text sounds equally confident.

HaluAgent (described in the agent hallucination survey). An autonomous detection agent built on small open-source LLMs that segments responses into claims, verifies each using external tools (web search, calculators, code interpreters), then applies reflective reasoning. Using a different model for verification avoids the fundamental problem of asking a model to audit its own output.

Active Research Directions

Several research programs are actively working on the open problems:

Scaling mechanistic detection to real-time. The ReDeEP team's work on detecting hallucination through mechanistic interpretability works in research settings. Scaling it to production inference speeds is an engineering challenge being pursued across several labs. Anthropic's interpretability program reports that understanding circuits still takes hours of human effort on short prompts. MIT Technology Review named mechanistic interpretability one of the 10 breakthrough technologies of 2026.

Hallucination attribution in multi-agent systems. The AgentHallu group is extending their attribution framework to multi-agent pipelines where Agent A's output feeds Agent B. Tracing which agent in which step introduced a hallucination across a multi-agent workflow is an unsolved attribution problem.

Domain-specific detection. MedHallu for medicine, PHANTOM for finance, and Large Legal Fictions for law are building domain-specific benchmarks because general hallucination metrics don't predict domain performance. The BullshitBench v2 benchmark added 100 questions across coding, medical, legal, finance, and physics specifically to surface domain-level failures that aggregate scores hide.

Learning to abstain. If models can't eliminate hallucination, can they learn to say "I don't know" when they're likely to be wrong? Current training penalizes abstention. Calibration-aware training and AA-Omniscience are steps in this direction, but the tension between useful helpfulness and honest uncertainty remains an active research area.

Silent hallucination detection. The ICLR 2026 workshop paper identifying silent hallucinations opened a new research direction: detecting false beliefs that exist in the agent's internal state but never surface in output. This requires monitoring internal representations during agent execution, which is connected to the mechanistic interpretability program but applied in a real-time agentic setting. No production system currently does this.

The Startups Building Solutions

| Company | Funding | Focus |

|---|---|---|

| Galileo | $68.1M | AI observability platform. Detects hallucinations, drift, and bias across the deployment lifecycle. |

| Patronus AI | $17M | Automated detection of hallucinations, copyright violations, and safety risks at scale. |

| Vectara | Funded | RAG platform with built-in hallucination minimization. Maintains the Hallucination Leaderboard. |

| Cleanlab | Funded | Trust scores per answer. Checks faithfulness to source context with outlier surfacing. |

| Nava | $8.3M | Security for autonomous agent payments. Prevents financial agents from acting on hallucinated data. |

Key Research Papers:

- LLM-based Agents Suffer from Hallucinations: Survey — First comprehensive taxonomy, 18 triggering causes

- AgentHallu Benchmark — 693 trajectories, 7 frameworks, automated attribution

- Silent Hallucinations in Agentic AI — ICLR 2026 workshop: hidden failure modes

- ReDeEP — Mechanistic interpretability for RAG hallucination (ICLR 2026)

- Large Legal Fictions — 69-88% hallucination on legal queries

- 172 Billion Token Study — Rates across temperatures, context lengths, hardware

- Chain-of-Verification — Self-verification method

- MedHallu — 10,000 medical QA pairs, best F1 = 0.625

- PHANTOM — Financial long-context hallucination benchmark

- Confidence-Faithfulness Gap — Orthogonal encoding of calibration vs confidence

- Vectara Hallucination Leaderboard — 37+ models, 7,700+ articles

- ICLR 2026 Workshop: Reliable Agentic AI — April 27, Singapore